AI Governance and D&O Liability: What Every Board Needs to Know in 2026

A Briefing for Directors, Officers, and Their Advisors

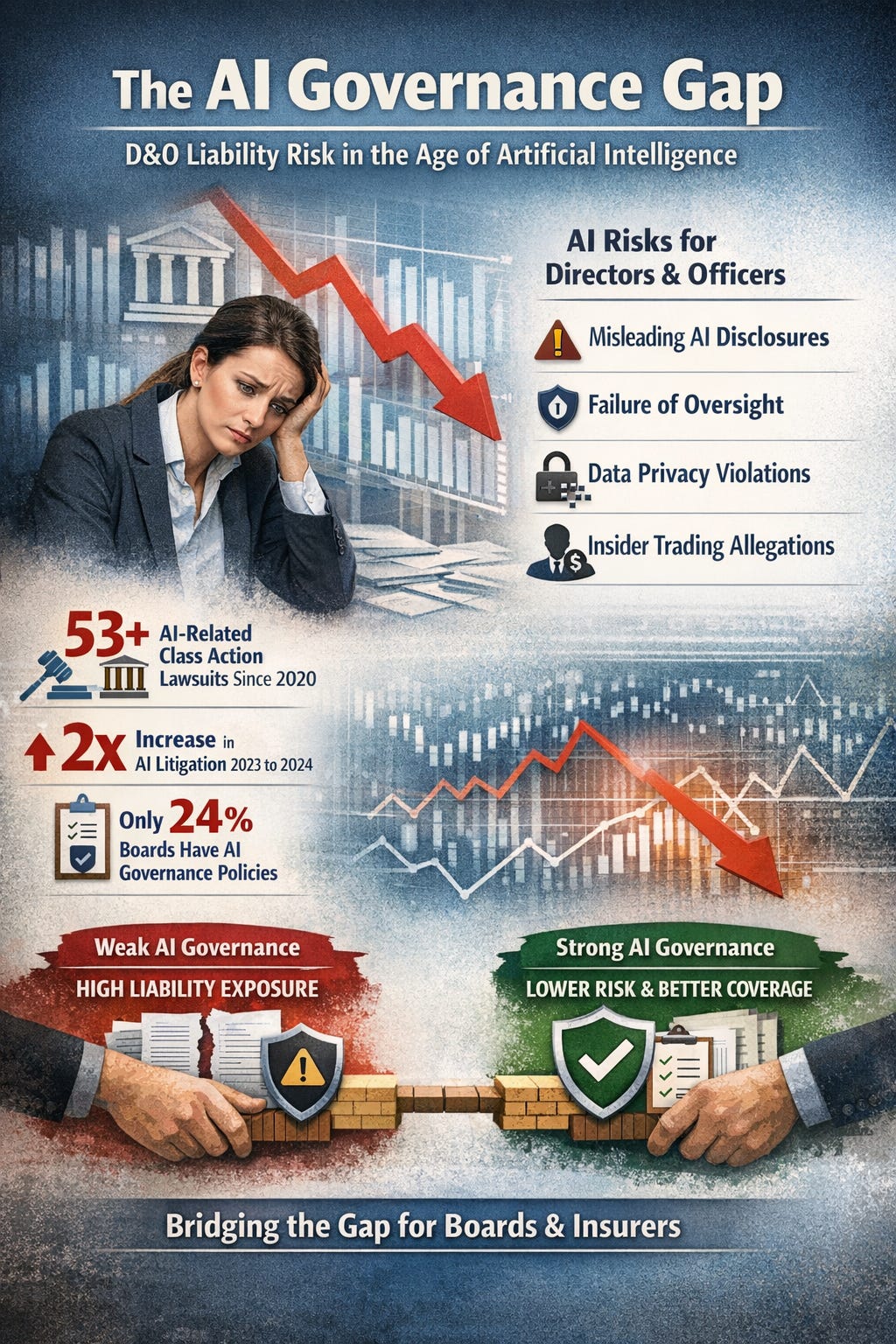

Artificial intelligence has moved from the technology department to the boardroom agenda. But for most boards, it has not yet moved into the governance framework. That gap between AI deployment and AI oversight is now the fastest-growing source of directors and officers liability exposure in American corporate governance.

This white paper presents the evidence: AI-related securities class actions have become the leading category of event-driven litigation, with filings doubling in 2024 and accelerating into 2025. Meanwhile, two-thirds of board directors report limited or no knowledge of AI, and fewer than one in four companies have board-approved AI governance policies.

For D&O insurance professionals, this gap represents both a risk and an opportunity. Boards that can demonstrate structured AI oversight are better positioned for favorable underwriting terms. Boards without governance face uncomfortable renewal conversations, restricted coverage, and unquantified liability exposure.

This briefing provides the data, the legal framework, and a practical roadmap for closing the AI governance gap before it becomes a claims event.

The Litigation Landscape: AI as D&O Risk

AI-related securities class actions (SCAs) have emerged as the dominant category of event-driven litigation in the United States. The trajectory is unambiguous and accelerating.

At least 53 AI-related securities class actions have been filed since March 2020, making AI the #1 category of event-driven SCA filings.

The pace of filing has increased dramatically. AI-related SCAs doubled from 2023 to 2024, and the first half of 2025 alone produced 12 filings. Average settlement values for D&O claims have risen 27% to approximately $56 million.

What Triggers AI-Related Securities Claims

AI securities class actions typically allege one or more of the following:

Material misrepresentation about AI capabilities, readiness, or competitive positioning in public disclosures, earnings calls, or marketing materials.

Failure to disclose material AI risks, including algorithmic bias, data privacy violations, regulatory exposure, and model failures that could affect financial performance.

Breach of fiduciary duty in overseeing AI deployment without adequate governance structures, risk assessment, or board-level accountability.

Insider trading by directors or officers who traded securities while aware of undisclosed AI-related risks or failures.

The common thread across these claims is the absence of documented board oversight. Plaintiffs do not need to prove the AI system failed. They need to prove the board failed to govern the AI system. The distinction is critical for directors, officers, and the professionals who insure them.

The Governance Gap: By the Numbers

The gap between AI deployment and AI governance at the board level is the central risk factor driving D&O exposure. The data paints a stark picture:

The implications are straightforward: 88% of organizations are deploying AI, but only 25% have board-level policies governing that deployment. That leaves roughly 63% of organizations (88% less 25%) deploying AI systems that affect customers, employees, financial performance, and regulatory compliance while their boards operate without documented AI oversight.

From an underwriting perspective, this gap is the risk. From an advisory perspective, it is the opportunity.
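The 63% figure above is 88% minus 25%, which holds only if every organization with a board-level policy is also among those deploying AI. As a rough check on that assumption, the sketch below computes the lowest and highest possible share of organizations that deploy AI without a board-level policy. The function name and the bounding approach are illustrative assumptions, not part of the underlying survey data.

```python
def ungoverned_deployer_range(deploying_pct: float, policy_pct: float) -> tuple[float, float]:
    """Bound the share of organizations deploying AI without a board-level policy.

    Inputs are percentages of all organizations. This is an illustrative
    calculation; the survey sources do not report the overlap directly.
    """
    non_deploying = 100.0 - deploying_pct

    # Lower bound: every organization with a policy is also a deployer.
    low = max(deploying_pct - policy_pct, 0.0)

    # Upper bound: as many policy holders as possible are non-deployers,
    # so the fewest possible deployers are covered by a policy.
    policies_outside = min(policy_pct, non_deploying)
    high = deploying_pct - (policy_pct - policies_outside)

    return low, high


low, high = ungoverned_deployer_range(88, 25)
print(f"{low:.0f}% to {high:.0f}%")  # prints "63% to 75%"
```

Under these figures, 63% is the most favorable reading of the gap; if some policy holders are not yet deploying AI, the share of ungoverned deployers is higher.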

The Fiduciary Framework: Why Boards Cannot Delegate AI Oversight

Board fiduciary duties do not create an exception for emerging technology. The duty of care requires directors to inform themselves of material risks facing the organization. The duty of loyalty requires directors to act in good faith to establish reporting systems for material risks. Under the Caremark standard, a board that consciously fails to establish a reporting system for a known material risk faces potential liability for breach of the duty of loyalty.

AI deployment is now a material risk for virtually every company of meaningful size. The question is no longer whether AI is a board-level issue. The question is whether the board has documented evidence that it treated AI as a board-level issue.

The Caremark Connection

The Delaware Court of Chancery's decision in In re Caremark International Inc. Derivative Litigation (1996) established that directors have an obligation to ensure that adequate information and reporting systems exist. A board that fails to implement any reporting system or, having implemented such a system, consciously fails to monitor its operation, faces potential liability.

AI governance fits squarely within this framework. As AI systems increasingly drive business decisions, affect customer outcomes, and create regulatory exposure, the absence of board-level reporting and oversight on AI constitutes exactly the kind of gap that Caremark liability is designed to address.

What Documented Oversight Looks Like

A defensible AI governance posture includes documented evidence of the following:

Board-level assignment of AI oversight responsibility to a specific committee (audit, risk, or technology) with AI explicitly within its charter.

Regular board reporting on AI deployment, risk, and governance, including AI inventory, risk assessments, incident tracking, and compliance status.

Board-approved AI governance policy covering acceptable use, risk appetite, vendor management, and ethical principles.

AI risk assessment methodology aligned with recognized frameworks (NIST AI RMF, ISO/IEC 42001) with documented results.

Board AI education and training records demonstrating that directors have sought to inform themselves about AI risks relevant to the organization.

The presence or absence of this documentation is what separates a board that can defend its oversight from one that cannot.
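One way to make the five evidence categories above trackable is a simple checklist record that a corporate secretary or governance lead could maintain. The sketch below is a hypothetical illustration only: the class name, field names, and helper function are assumptions introduced here, not a prescribed format or a carrier requirement.

```python
from dataclasses import dataclass, fields


@dataclass
class OversightEvidence:
    """Hypothetical checklist mirroring the five evidence categories above."""
    committee_charter_covers_ai: bool = False        # oversight assigned to a named committee
    regular_board_reporting: bool = False            # inventory, risk, incidents, compliance
    board_approved_policy: bool = False              # acceptable use, risk appetite, vendors, ethics
    framework_aligned_risk_assessment: bool = False  # e.g. NIST AI RMF, ISO/IEC 42001
    director_training_records: bool = False          # board AI education is documented


def missing_evidence(evidence: OversightEvidence) -> list[str]:
    """Return the names of evidence categories that are not yet documented."""
    return [f.name for f in fields(evidence) if not getattr(evidence, f.name)]


# Example: a board with a policy and regular reporting, but nothing else documented.
current = OversightEvidence(board_approved_policy=True, regular_board_reporting=True)
for gap in missing_evidence(current):
    print("Undocumented:", gap)
```

A flat true/false record is deliberately crude. In practice each item would point to the underlying artifact (charter text, minutes, assessment report), since the defense rests on the documents themselves rather than on a checkbox.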

The D&O Insurance Implications

D&O carriers are responding to the AI litigation trend by incorporating AI governance into their underwriting process. This has practical implications for boards, their advisors, and the brokers who place their coverage.

Underwriting Is Changing

Carriers are increasingly adding AI-related questions to D&O applications and renewal questionnaires. These questions typically address whether the company has an AI governance policy, whether the board receives AI-related reporting, whether AI risk assessments have been conducted, and whether the company has experienced AI-related incidents.

Companies that cannot provide satisfactory answers face three potential consequences: higher premiums reflecting the unquantified AI risk, coverage restrictions or exclusions related to AI claims, or, in extreme cases, declination of coverage.

Governance as Premium Mitigation

Conversely, companies that can demonstrate structured AI governance are better positioned during the underwriting and renewal process. Documented governance provides carriers with evidence that the board is exercising reasonable oversight, which supports more favorable risk assessment.

The parallel to cybersecurity is instructive. A decade ago, cyber insurance was a niche product and cybersecurity governance was optional. Today, cyber coverage requires demonstrated security controls, and companies with mature cybersecurity programs receive significantly better terms. AI governance is on the same trajectory, and the window for proactive adoption is narrowing.

An investment of $75K–$125K in governance today can help limit D&O premium increases, provide documented fiduciary defense in litigation, and create a competitive advantage in the boardroom.

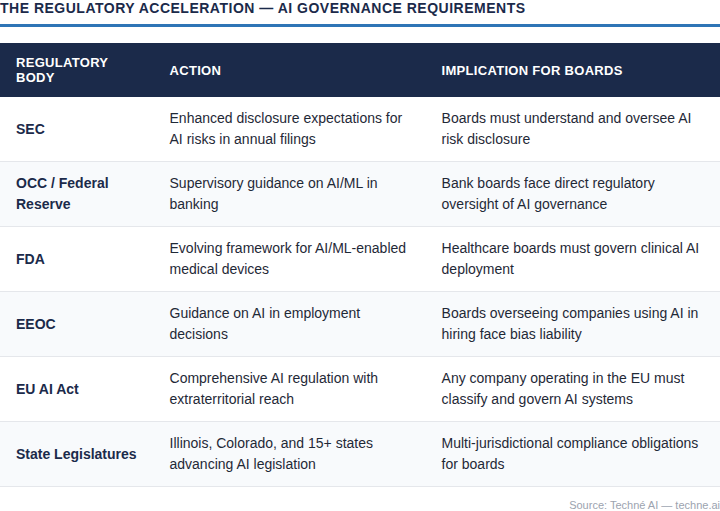

The Regulatory Acceleration

AI governance is not only a market expectation. It is increasingly a regulatory requirement across multiple jurisdictions and industry sectors.

The regulatory trajectory is clear: AI governance is moving from voluntary best practice to mandatory compliance. Boards that establish governance frameworks now position themselves ahead of regulatory requirements rather than reacting to them.

A Practical Roadmap for Board AI Governance

Effective AI governance does not require directors to become technical experts. It requires the board to exercise its oversight function with respect to AI in the same way it exercises oversight over financial reporting, cybersecurity, and other material risk domains.

Phase 1: Assessment (30 Days)

Establish a baseline understanding of the organization’s AI deployment, risk exposure, and governance gaps. This includes a complete inventory of AI systems in use, identification of the highest-risk AI applications, assessment of existing governance policies and structures, and benchmarking against peer organizations and applicable regulatory frameworks.

Phase 2: Framework Design (60 Days)

Build the governance architecture based on the assessment findings. This includes formal assignment of AI oversight to a board committee, development of board-approved AI governance policies, creation of management reporting structures for AI risk, alignment with recognized frameworks such as NIST AI RMF and ISO/IEC 42001, and establishment of AI risk appetite and tolerance levels.

Phase 3: Board Enablement (30 Days)

Ensure the board has the knowledge and tools to exercise effective oversight. This includes board education on AI risks relevant to the organization, development of AI-specific board reporting and metrics, tabletop exercises simulating AI governance scenarios, and documentation of board deliberations and governance activities.

Phase 4: Ongoing Governance

Maintain and mature the governance program over time through regular board reporting on AI metrics and risk, annual policy review and update, continuous monitoring of regulatory developments, periodic governance assessments and certification renewal, and integration of AI governance into enterprise risk management.
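For planning purposes, the three time-boxed phases above can be turned into target dates. The sketch below assumes the phases run back to back with no overlap, which the roadmap does not state explicitly; under that assumption the program reaches ongoing governance in 120 days. The names `PHASES` and `milestones` are illustrative.

```python
from datetime import date, timedelta

# Phase durations in days, taken from the roadmap above.
PHASES = [
    ("Assessment", 30),
    ("Framework Design", 60),
    ("Board Enablement", 30),
]


def milestones(start: date) -> list[tuple[str, date]]:
    """Return the target completion date of each phase.

    Assumes phases are strictly sequential (an assumption of this sketch).
    """
    results = []
    end = start
    for name, days in PHASES:
        end = end + timedelta(days=days)
        results.append((name, end))
    return results


# Example: a program kicked off on 1 January 2026.
for name, due in milestones(date(2026, 1, 1)):
    print(f"{name}: complete by {due.isoformat()}")
```

If phases overlap in practice, for example if board education begins while policies are still being drafted, the end date would come earlier than this sequential estimate.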

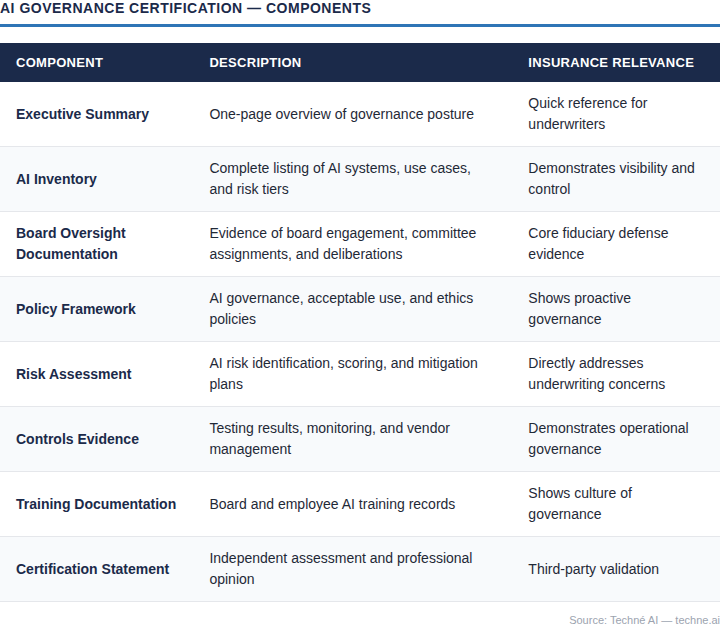

The Role of AI Governance Certification

Just as SOC 2 reports provide documented evidence of cybersecurity controls for cyber insurance placements, an AI Governance Certification provides documented evidence of board-level AI oversight for D&O underwriting and renewal purposes.

An AI Governance Certification includes:

The certification creates a documented record that can be submitted to carriers as part of the D&O renewal process, presented to regulators in the event of inquiry, and produced in litigation as evidence of reasonable board oversight.

The Window Is Narrowing

AI governance is not a future concern. It is a present obligation. The litigation has already begun. The regulatory frameworks are already taking shape. The underwriting questions are already being asked.

Boards that act now to establish documented AI governance protect their directors from personal liability, position their companies for favorable D&O terms, and create a defensible record of fiduciary diligence that will serve them in any future inquiry.

The cost of governance is a fraction of the cost of its absence. The question for every board is not whether to govern AI, but whether it can afford not to.

About Techné AI

Techné AI is an AI governance advisory firm that helps boards, executive teams, and their advisors build defensible AI oversight structures. Founded by Khullani Abdullahi, J.D., Techné AI combines legal expertise in fiduciary duty and corporate governance with deep technical fluency in artificial intelligence risk management.

Services include enterprise AI governance program builds, board AI governance training, AI Governance Readiness Assessments, AI Governance Certification for D&O renewal support, and fractional Chief AI Governance Officer engagements.

Disclaimer: This white paper is provided for informational purposes only and does not constitute legal advice. Organizations should consult with qualified legal counsel regarding their specific AI governance obligations and D&O insurance considerations.